Lead Essay

The End of Role Clarity

The idea of role clarity has come up in about 80% of the conversations I've had with teams and leaders. (By the way, all of the questions here follow Betteridge's Law of Headlines: if there's a question, the answer is no.)

- It comes up when there are disputes about the propriety of a given action. Should so-and-so have done what they did?

- When there are questions about whether individuals can do their best work. Does so-and-so have a good idea of what's expected of them?

- When there are worries about people's ability to grow. Do managers know what it takes to become a leader?

- When execs wonder if they're hiring right. Are we confident this JD is what we actually need?

Role clarity, or the lack of it, gets blamed in every case.

The classic organizational psychology research (Robert Kahn and colleagues in 1964, John Rizzo and colleagues in 1970) treats role ambiguity as almost uniformly destructive: it lowers satisfaction; it reduces motivation; it drives emotional exhaustion. A Rutgers meta-analysis confirmed a moderate negative relationship between role ambiguity and job performance. This is the received wisdom, and I think it's increasingly wrong.

Eleven years ago I wrote about my dim view of this idea, and I truly believe knowledge workers can now leave it behind, break Jevons' Paradox, and kill Baumol's Cost Disease in the process.

Huh?

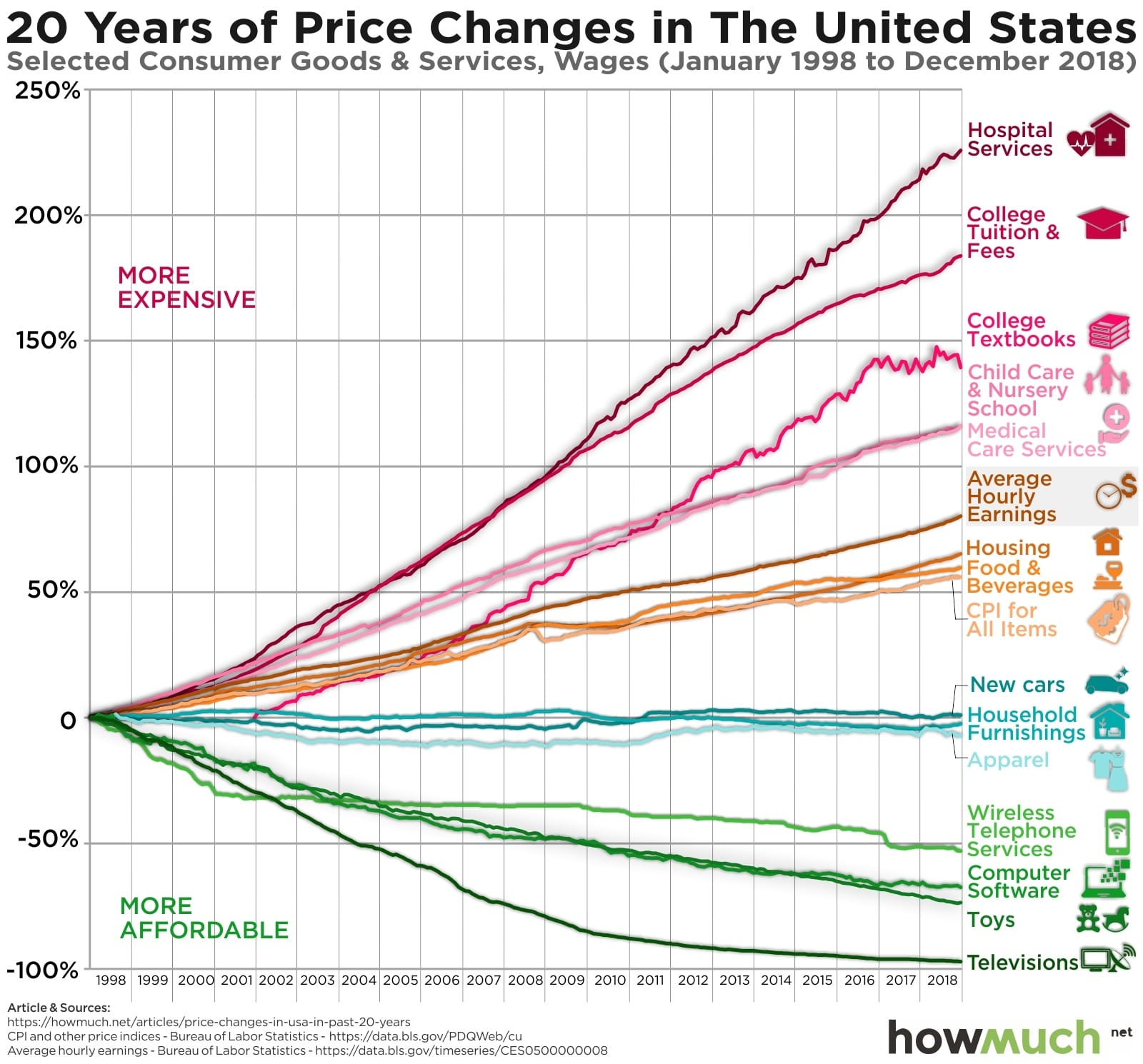

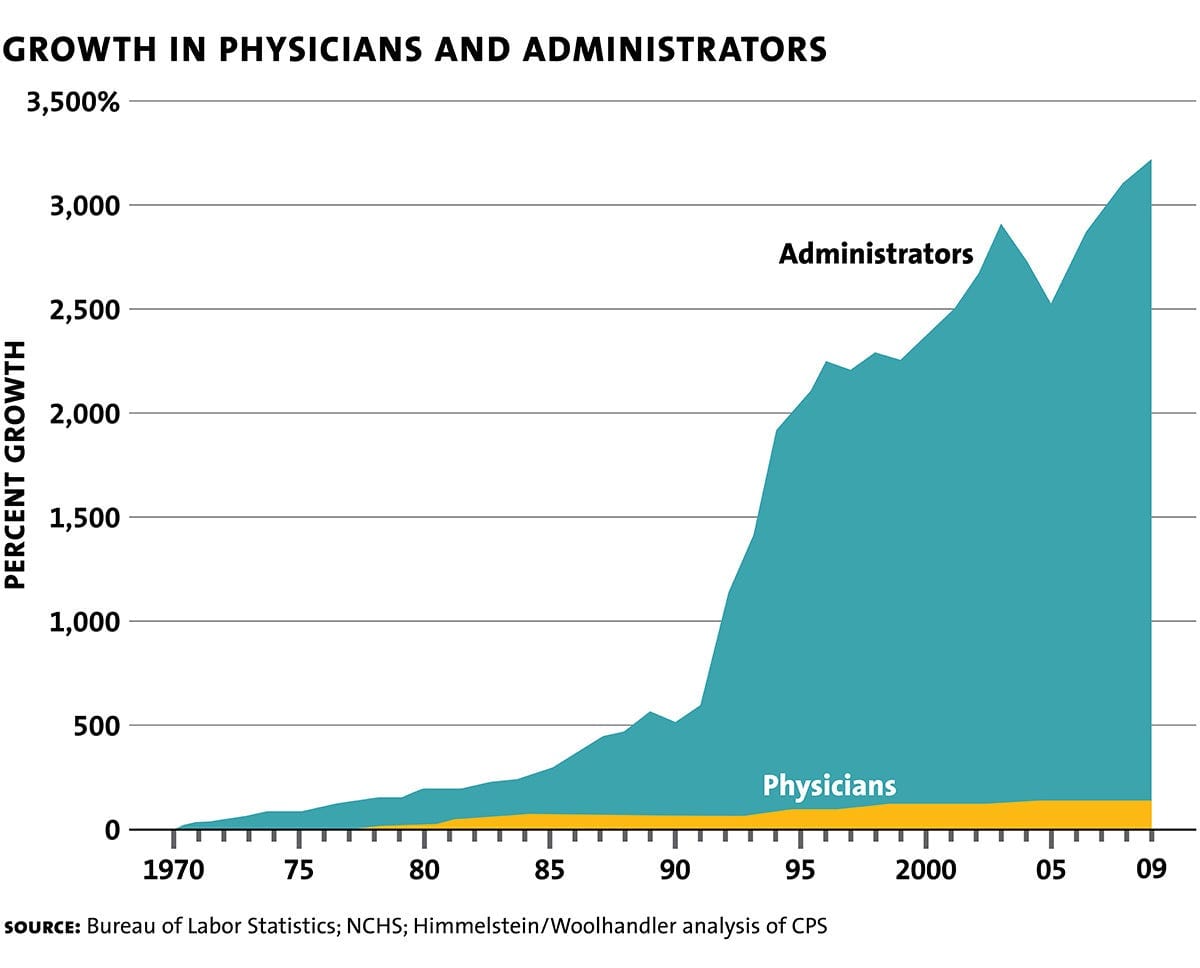

William Stanley Jevons observed in 1865 that Watt's more efficient steam engine massively increased coal consumption rather than reducing it, because efficiency made coal viable in far more applications. This happened in knowledge work too, where collaborative tools that were supposed to simplify communication multiplied channels instead. Every time we make administration easier, we produce more administration: more meta-work about work.

Economist William Baumol noticed in the 1960s that some sectors just can't get more productive: a string quartet still needs four people and forty minutes to play Beethoven. Costs in those sectors rise anyway, because they have to compete for labor with sectors that are getting more productive. Management has been a Baumol sector, and that sucks for everyone involved, including (Bane voice) you, the people.

Yes, RACI made your healthcare more expensive.

So why can we leave role clarity behind?

TL;DR: AI lets us do a lot more with a lot less → every team can be smaller → smaller teams want and need less role clarity.

I'm not going to try to convince you that the AI part of this argument is real. I know it is because I've seen it. If you're not there yet, fine! You can still believe that teams can and should be smaller.

I spoke recently with Michelle Peng at Charter about Hidden Patterns, and among many good questions, she asked: "What do you think companies will look like, how will they be organized, if they apply all of the ideas in the book?"

My expectation is that organizations will both be smaller and feel smaller, feel more local, even while achieving the same or better outcomes. They'll be made of networks of teams. Yes, those teams will in many cases be part of a bigger thing, but I think that'll feel more abstract and federated, and the thing you'll care about is the small(er) team around you.

Smaller teams need and want less clarity. There's more overlap between roles, more closeness with teammates, more exploration of what you can do, what they can do, and genuine optimism about what might be possible tomorrow. Obsessing over where I stop and where you pick up freezes what's possible.

Role clarity is a symptom of relational poverty

Richard Hackman's research found the optimal team size is roughly 4.6 members, because coordination costs grow much faster than headcount: the number of pairwise links between members rises roughly with the square of team size. As a result, he never allowed teams larger than six in his Harvard classes. Jennifer Mueller found that in larger teams, individuals perceive less available support, or "relational loss." Fewer people means more of each other.
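
One rough way to make the coordination-cost point concrete is to count the one-to-one relationships a team has to maintain. The sketch below assumes the standard pairwise-links formula, n(n-1)/2, as a stand-in for coordination cost. That is a simplification for illustration, not a model taken from Hackman's or Mueller's studies, and the function name is hypothetical.

```python
def pairwise_links(team_size: int) -> int:
    """Count the distinct one-to-one relationships in a team of the given size.

    Assumption: each pair of teammates is one link, so n people have n*(n-1)/2 links.
    """
    if team_size < 0:
        raise ValueError("team_size must be non-negative")
    return team_size * (team_size - 1) // 2


# Compare a few team sizes; six is the class-team ceiling mentioned above.
for size in (3, 5, 6, 10, 20):
    print(f"{size:>2} people -> {pairwise_links(size):>3} links")
```

Going from 6 people to 20 multiplies headcount by a little more than three, but the number of relationships to tend goes from 15 to 190.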